Sign Language Interpreter

Watch our demo below!

Our project focuses on American Sign Language (ASL) recognition and draws inspiration from Buolamwini’s ideals. We aim to build algorithms that give mute and deaf individuals a “voice” in spaces where interpreters are unavailable, helping them communicate more freely in everyday and professional life. The project is ethical as well as technical: it embodies the principle that technology should empower marginalized communities rather than exclude them.

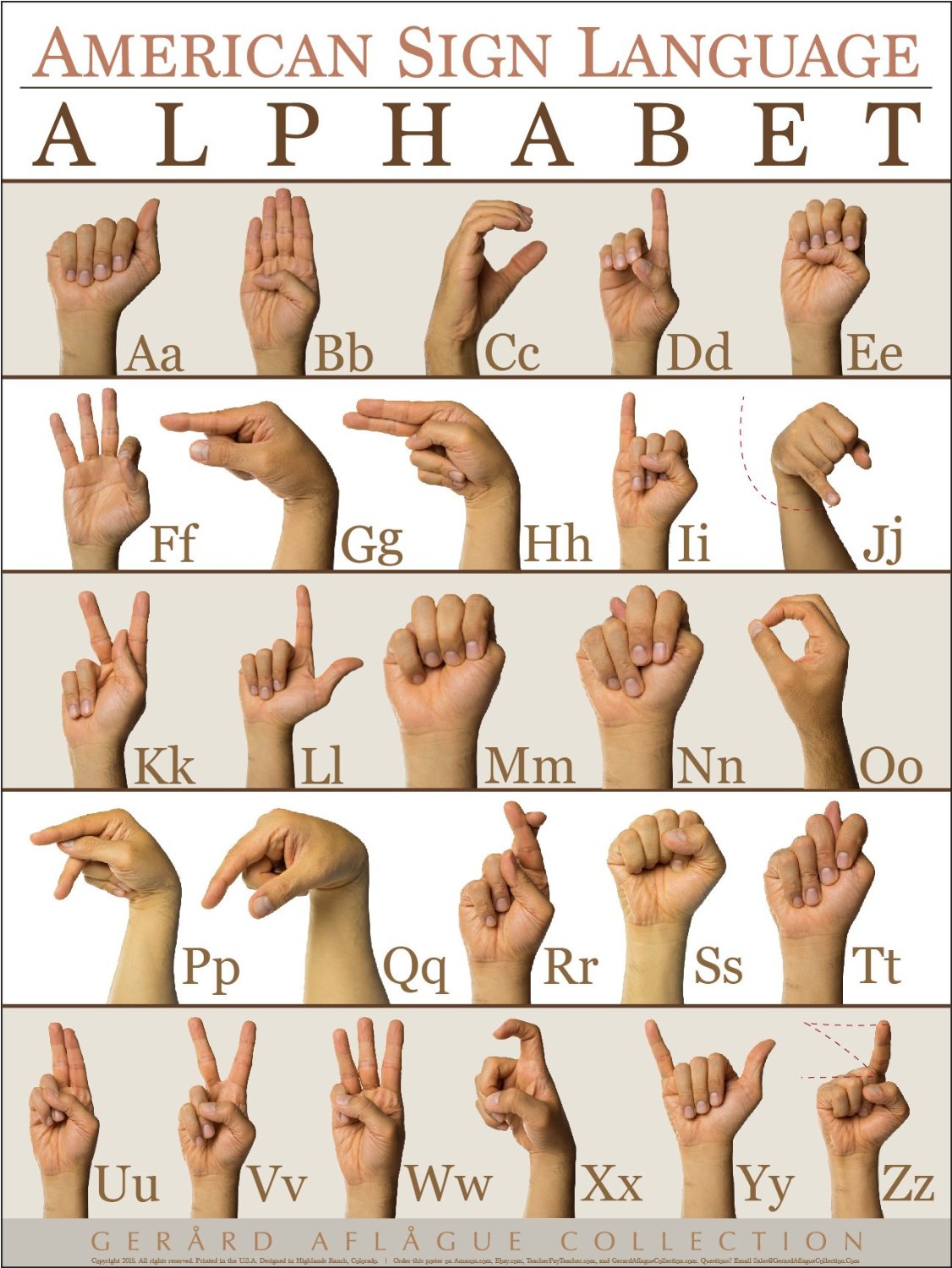

ASL Alphabet Reference

This chart shows the ASL alphabet. Use it as a reference and try these signs with our model below.

Technical Process

We used Google’s Teachable Machine to prototype recognition. The tool let us train on still snapshots of hand shapes but not on motion, so the prototype only covers signs that can be recognized from a single frame. We tested multiple hand positions for letters at the beginning of the alphabet, but the model became too large to export fully. Despite these constraints, we prioritized inclusivity over efficiency.
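As a rough illustration of the snapshot-based approach, the sketch below turns one frame's class probabilities into a letter. It assumes the classifier returns one probability per trained class, which is how image classifiers of this kind typically report results. The function name `pick_letter`, the label list, and the 0.8 threshold are hypothetical and are not taken from our exported model.

```python
from typing import Optional, Sequence


def pick_letter(
    probs: Sequence[float],
    labels: Sequence[str],
    threshold: float = 0.8,  # hypothetical cutoff, not a tuned value
) -> Optional[str]:
    """Return the most probable label, or None when the model is unsure."""
    if len(probs) != len(labels):
        raise ValueError("probs and labels must have the same length")
    if not probs:
        return None
    # Index of the class with the highest probability for this frame.
    best = max(range(len(probs)), key=lambda i: probs[i])
    return labels[best] if probs[best] >= threshold else None


# Hypothetical labels for a model trained on the first few letters.
labels = ["A", "B", "C"]
print(pick_letter([0.05, 0.90, 0.05], labels))  # B
print(pick_letter([0.40, 0.35, 0.25], labels))  # None
```

Returning `None` below the threshold lets an interface show "no sign detected" instead of guessing, which matters when a hand is only partly in frame.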

Prioritizing inclusivity over efficiency mirrors Buolamwini’s lesson that justice must guide design choices. Even with these technical limitations, we chose to emphasize accessibility for diverse users rather than optimize for speed or compactness. Our documentation records the classes trained, the number of samples per class, and the environmental conditions (lighting and background). This recordkeeping supports future audits and collaborative improvement.
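One way to keep such a record machine-readable is sketched below. This is not our actual documentation format: the `TrainingRecord` class, its field names, and every value in the example are placeholders chosen for illustration. The helper flags classes with comparatively few samples, which is the kind of check an audit might start with.

```python
from dataclasses import asdict, dataclass
import json


@dataclass
class TrainingRecord:
    """Hypothetical record of what a model was trained on."""

    classes: list[str]
    samples_per_class: dict[str, int]
    lighting: str
    background: str

    def underrepresented(self, min_ratio: float = 0.5) -> list[str]:
        """Classes with fewer than min_ratio times the largest class count."""
        if not self.samples_per_class:
            return []
        largest = max(self.samples_per_class.values())
        return [
            c
            for c in self.classes
            if self.samples_per_class.get(c, 0) < min_ratio * largest
        ]


# Placeholder values only; these are not our real dataset counts.
record = TrainingRecord(
    classes=["A", "B", "C"],
    samples_per_class={"A": 100, "B": 40, "C": 90},
    lighting="indoor, overhead light",
    background="plain wall",
)

print(json.dumps(asdict(record), indent=2))  # shareable JSON for audits
print(record.underrepresented())  # ['B']
```

Storing the record as JSON alongside the model makes it easy for collaborators to compare training conditions across versions.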